Today Bitcoin.com releases the open source SLP Indexer Server for indexing SLP tokens on Bitcoin Cash. It’s written in Java with scalability in mind.

I’m going to explain the validation strategy, and why it’s different from other existing systems powering the Bitcoin Cash SLP ecosystem today.

Current systems that indexes SLP transactions do a full verification of the Directed Acyclic Graph, also known as the DAG. Full DAG search does not scale very well as it tends to take a very long time for large graphs. The way current SLP validators operate won’t work well long term with increasing usage of SLP. Electron Cash is already having trouble validating SPICE tokens due to very long verification chains. Caching can help speed it up short term, but if someone would construct a very large block filled with SLP transactions, it would be very computationally heavy to validate all transactions, partially because all transactions are ordered lexicographically (CTOR) and not in the order the DAG flows.

But don’t worry. There is a way to work around this.

If you take a step back and think about it, the transaction order doesn’t matter. But you will need to give up on the idea of traversing the whole DAG. Bitcoin by its essence is UTXO based. The UTXO set is one of the greater inventions in Bitcoin. We can apply the CTOR validation strategy to attack this problem.

The way a bitcoin full node validates a block is not to traverse the DAG, but instead it summarizes all transactions in random order and calculates a final net value. If the summary doesn’t add up, the block is invalid. First, the node adds all outputs to the UTXO set, then in no particular order, validates that each transaction has a correct balance between inputs and outputs (so no bitcoin is created out of thin air) and other consensus rules. By first assuming every input is valid, all transactions can be validated using multiple threads where the order doesn’t matter. If one incorrect output is found, the whole block is discarded as invalid. This method is best described as “valid until proven invalid”.

If one normal BCH transaction is invalid, the whole block is invalid. With SLP, an invalid SLP transaction is just a normal BCH transaction. SLP uses a metadata layer scheme to color standard BCH utxos. The UTXO set for SLP is the same as normal BCH, but with extra metadata for token id and token amount.

If a SLP transaction is invalid, the UTXO coloring information is discarded and the outputs becomes just plain BCH outputs. This causes a few problems for us when we write a validator. Unlike plain BCH, an invalid SLP transaction doesn’t invalidate the block, and it doesn’t necessarily invalidate all the children of that transaction. We can apply the multi threaded randomly ordered validation technique for SLP until we find an invalid transaction. Instead of marking the whole block as invalid, we will need to recursively re-validate the children. We can’t simply invalidate them, because an invalid parent can have a valid child in SLP since the invalid SLP input just gets re-classified to a plain BCH input.

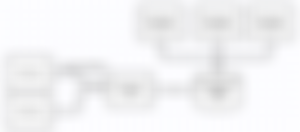

Here is an example.

Transaction C consists of two inputs, 100 SPICE + 200 SPICE. The outputs consist of just 200 SPICE. If both inputs are valid SLP UTXO, we will burn 100 SPICE. But if one output is invalid, then we just have 200 SPICE gross input, and 200 SPICE gross output.

If we validate transaction C before transaction A, we assume at first that all inputs are valid, and a net amount of 100 SPICE is burned. When we validate transaction A, we notice that the SLP UTXO is invalid (it only has 50 SPICE inputs, so the 100 SPICE is not a valid output and 50 SPICE got burned) and the outputs are re-classified as normal BCH outputs. Because of this, we are forced to re-validate the children as they could be invalid.

After validating the whole block, the DAG looks like this:

Transaction C does not burn any tokens and ends up with a net amount of 0 instead of -100. If transaction C would have a gross output of 300 SPICE, it would have been invalid as well.

Under normal circumstances we can validate all transactions in a block in linear time if no transaction has more than one invalid child. The potential performance hit we could encounter would be if we have a long chain of children after an invalid transaction. In the worst case scenario we end up with 1->k=25 Σ 25k children that need to be re-validated if someone builds a block filled with one invalid parent and otherwise valid children. Please keep in mind that this is only during the initial block download. All unconfirmed transactions are verified immediately as soon as our software sees it on the network. When a new block arrives it will only validate transactions not seen before.

With the current limit of 25 unconfirmed chained transactions, worst case we would end up with re-validating 7500 children.

The Bitcoin.com SLP Indexer can validate an unconfirmed SLP transaction in around 1 ms. Our software has been running in production for months already, powering our new Bitcoin.com Wallet backend.

Bitcoin.com is happy to release SLP Indexer Server open source for the Bitcoin Cash community. It’s very easy to set up, runs on MongoDB and scales well. It consists of one application that validates the SLP transactions and saves everything in MongoDB, and one API application. The first part is called the Bitcoin.com SLP Indexer Service. The other part is a read-only REST API layer called Bitcoin.com SLP API. The SLP API Service can scale linearly with MongoDB and is able to serve around 20k requests per second uncached on a M5.xlarge server on AWS.

We invite exchanges, wallets, gaming platforms, and SLP supported applications to try this out. We’re confident in its ability to scale to your business needs.

We have planned a full security audit in January to make sure the validator is fully compatible with other SLP validators out there.

The software is written in Java and made from scratch with no dependencies on current JavaScript based SLP libraries. It’s using bitcoinj to connect to the BCH network and can run without dedicated full nodes. Only the SLP data is saved to the database, so the amount of data that remains on the server is small.

Bitcoin.com will offer this service as a cloud service and will be a part of our public REST API. Anyone who wants to run it themselves on their own servers can also do that.

The SLP Indexer Server can be found here.

Emil Oldenburg

CTO, Bitcoin.com

this shit is over my head